A few weeks back at our Product AI Summit, one question kept surfacing across the agenda, even when the speaker on stage wasn't trying to ask it. It's the same question quietly sitting on most product teams' plates right now.

If AI is making us ship faster, are we sure we're shipping the right things?

Brennan McEachran's session sharpened a version of this that stuck with us. It's the question this post is about.

The build half of your product loop is moving faster. The feedback half is moving at about the same speed it always did.

Build Cycles Got Faster. Your Feedback Loop Didn't.

AI's productivity gains live almost entirely on one side of the development cycle.

Look at where AI has actually changed your day. Spec drafting, faster. Prototyping, faster. Writing code, deploying it, putting the docs together after the fact, all of it faster. If you've spent the last year watching your team adopt these tools, you've watched the time-to-ship curve bend.

Now look at the other side. After the feature ships, what's faster? How long does it take you to know whether real users find the new flow confusing, whether the AI-drafted copy is doing its job, whether anyone's even opening the thing you spent three days building?

Be honest. Probably about as long as it took two years ago.

That gap is the story.

Why Teams Have Always Skipped Post-Launch Validation

Post-launch validation is the work most teams have always under-invested in, and AI raises the price of skipping it.

Centercode has been in this corner of the work for 25 years, and the pattern hasn't moved much. Teams ship something. Attention swings to whatever's next on the roadmap. Whatever was supposed to happen after launch, the structured collection of how it actually landed with users, gets handled in a Slack thread on a Friday afternoon. Or skipped.

In our experience, the reasons stay the same:

- Launch fatigue is real. By the time something's out the door, the team's already been promised to the next initiative.

- Feedback collection used to be expensive. Recruiting users, building intake, sorting through what came in, all of it took weeks.

- Attribution was always messy. Hard to prove what specific learning came from what specific post-launch effort.

- Post-launch work rarely shows up on anyone's quarterly objectives.

None of these reasons are new. They've just been quietly costing teams the whole time. Most teams could absorb the cost because the pace of shipping gave them room to. A miss on a quarterly release was painful but recoverable.

That math has changed.

What Faster Shipping Changes

When you ship weekly instead of quarterly, the cost of guessing compounds.

Run the numbers in your head. If your team used to ship a meaningful change every six weeks and now ships one a week, you're making roughly six times as many decisions per quarter. Each of those decisions is a small bet on what users want.
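If you'd rather not run it in your head, here's a rough sketch of the same arithmetic. It assumes a 13-week quarter and treats every ship as one decision; both are simplifications, and the function name is ours for illustration only.

```python
# Assumption: a quarter is 13 weeks and each ship counts as one decision.
WEEKS_PER_QUARTER = 13


def ships_per_quarter(weeks_between_ships: float) -> float:
    """Number of ships that fit in one quarter at a given cadence."""
    return WEEKS_PER_QUARTER / weeks_between_ships


before = ships_per_quarter(6)  # one meaningful change every six weeks
after = ships_per_quarter(1)   # one meaningful change every week
multiplier = after / before    # how many more bets you place per quarter

print(f"before: {before:.1f} ships, after: {after:.0f} ships, "
      f"multiplier: {multiplier:.0f}x")
```

Swap in your own before-and-after cadence. The multiplier is just the ratio of the two intervals, so the length of the quarter drops out.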

A bet without feedback is a guess.

A guess stacked on the last guess, six weeks deep, with no signal coming back, is how teams end up surprised by their numbers in Q4. Quarterly misses used to cost you a quarter. Weekly misses, without a working feedback loop, drift across the next ten ships before anyone catches the pattern.

Output gains are the easy story to tell. The teams that win this era will be the ones with the shortest ship-to-signal time.

What A Working Feedback Loop Looks Like At Pace

Closing the loop is a discipline problem before it's a tooling problem.

You don't need anything exotic. The teams we see doing this well share a few habits.

Test with people outside your team

Your design team poking around in staging counts as QA, not user feedback. They already share your mental model, so the signal you need has to come from people who don't. In practice, this is a small panel of real users you can activate inside a week, drawn from a pool that's already opted in. Cold-recruiting fresh testers after every ship doesn't scale to weekly cycles.

Build a deliberate listening window for every meaningful ship

Hoping someone will complain if there's a problem doesn't qualify as a system. You need scheduled intake with a name attached to what comes back. That looks like a defined 7-to-14-day window after launch, a clear way for users to send feedback (in-product prompts work, so do quick surveys), and time on someone's calendar to review what comes in. Without that, you're relying on someone happening to tell you the right thing at the right time.

Get a meaningful read inside seven days

If you ship Monday and hear nothing until the next sprint review, the loop is too long for the pace you're shipping at. Sooner is better. A meaningful read combines usage data with qualitative feedback. Usage shows whether people are using the feature and sticking with it. Qualitative shows what they think when they hit friction. The first 72 hours tell you whether the launch is broken at a basic level. The first week tells you whether the work actually did what you hoped.

Assign a human to receive what comes back

This is where most loops break. Feedback shows up, lands in a channel, and dies there because nobody owns the response. The owner's job is reviewing submissions on a schedule and routing the real ones to whoever can act on them. That person is rarely the PM who just shipped, since their attention is already on the next thing. A feedback manager works for this. So does a rotating role on the team. A named owner is what makes the loop run.

If that list feels obvious, it's because it is. Designing a loop has never been the hard part. The hard part is running one consistently when the next thing is already in flight.

Common Questions About Feedback Loops At AI-Era Pace

A few of the questions that keep coming up in conversations with product teams adjusting to faster shipping cycles.

How fast does a feedback loop need to be?

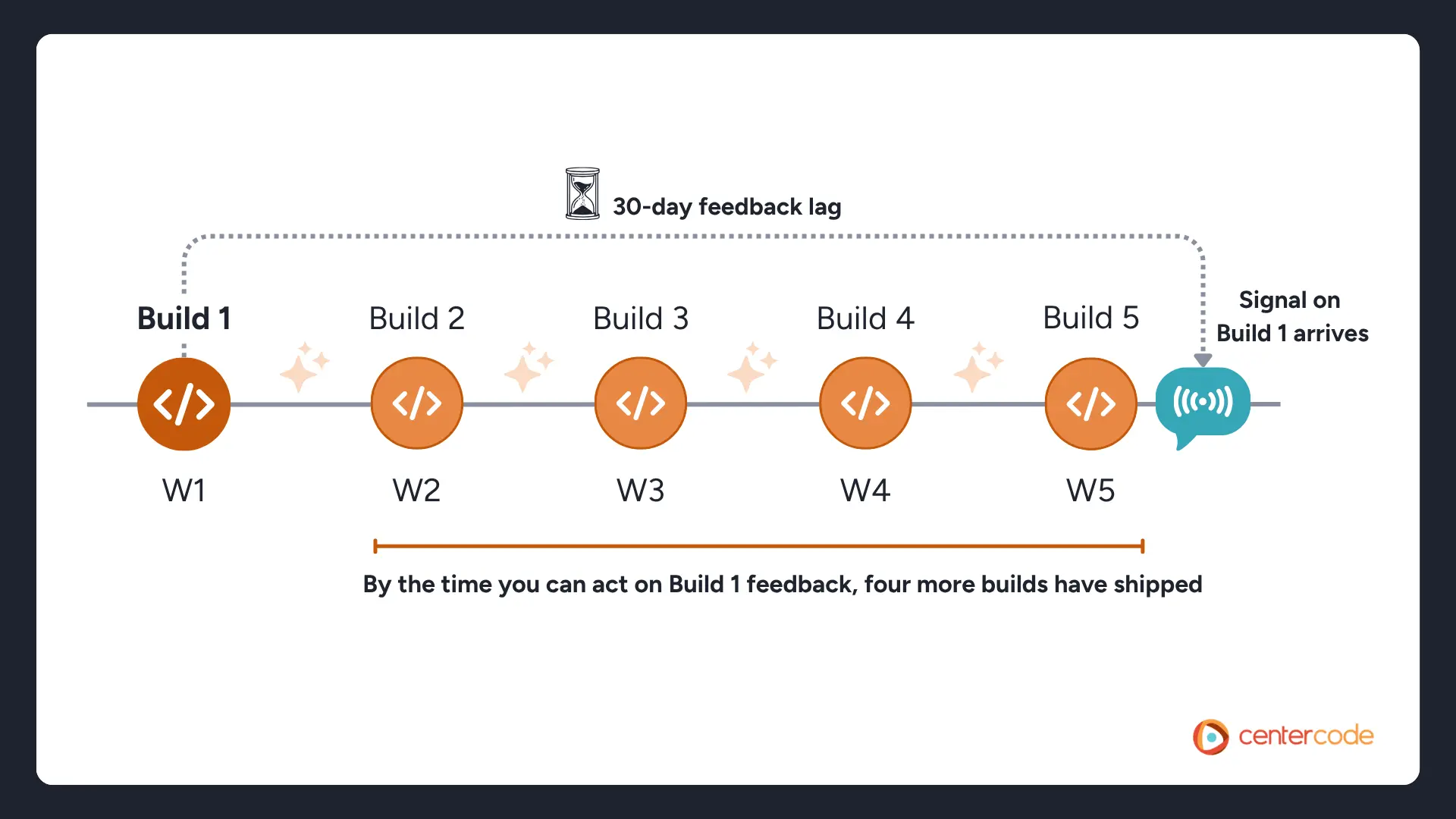

Faster than your shipping cadence. If you ship weekly but feedback takes 30 days to reach you, you've shipped four more things by the time you can act on what you learned. Rough rule: ship-to-signal time should be shorter than ship-to-ship time. For weekly cycles, that means a meaningful read inside the first 7 days post-launch.
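The rough rule above is simple enough to check in a few lines. This is a minimal sketch, not a formal model: the function names are hypothetical, and it assumes both intervals are measured in whole days.

```python
def ships_before_signal(ship_interval_days: int, signal_delay_days: int) -> int:
    """How many further ships go out before feedback on the first one arrives."""
    return signal_delay_days // ship_interval_days


def loop_keeps_pace(ship_interval_days: int, signal_delay_days: int) -> bool:
    """Rough rule: ship-to-signal time should be shorter than ship-to-ship time."""
    return signal_delay_days < ship_interval_days


# Weekly shipping with feedback that takes 30 days to reach you.
print(ships_before_signal(7, 30))  # 4
print(loop_keeps_pace(7, 30))      # False

# Weekly shipping with a meaningful read on day 5.
print(loop_keeps_pace(7, 5))       # True
```

The first case matches the example above: four more ships are out the door before you can act on what you learned from the first.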

What counts as a meaningful post-launch read?

Enough signal to know whether the feature is working as intended. Usage data shows whether people are actually using it. Qualitative feedback shows whether they like using it once they do. A single metric like NPS or activation rate by itself rarely tells you enough.

Who should own the feedback loop?

Not the PM who just shipped. Their attention is already on the next thing, and they're the wrong person to evaluate signal on something they just built. A customer experience lead, a feedback manager, or a rotating role across the team all work. The specific role matters less than the calendar time and the authority to route what comes in.

What's the smallest version of a working feedback loop?

You need:

- A defined listening window of 7 to 14 days post-ship

- A clear way for users to submit feedback

- A named owner reviewing what comes in on a schedule

- A path for routing real issues to whoever can act on them

Tools and frameworks help at scale, but the loop itself doesn't need much to start working.
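To show how little that is, here's the four-item list written down as a small record. Treat it as a sketch under our own assumptions: the class name, field names, and example values are hypothetical and aren't taken from any particular tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ListeningWindow:
    """The smallest loop: a window, an intake channel, an owner, a route."""
    feature: str
    ship_date: date
    owner: str            # named person reviewing what comes in
    intake_channel: str   # how users submit feedback
    routing_path: str     # where real issues go next
    window_days: int = 14

    def __post_init__(self) -> None:
        # The post suggests a 7 to 14 day window; reject anything outside it.
        if not 7 <= self.window_days <= 14:
            raise ValueError("window_days should be between 7 and 14")
        # A loop with no owner, channel, or route isn't a loop yet.
        for name in ("owner", "intake_channel", "routing_path"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must be set")

    @property
    def closes_on(self) -> date:
        return self.ship_date + timedelta(days=self.window_days)

    def is_open(self, today: date) -> bool:
        return self.ship_date <= today <= self.closes_on


window = ListeningWindow(
    feature="new onboarding flow",
    ship_date=date(2026, 3, 2),
    owner="Sam",
    intake_channel="in-product prompt",
    routing_path="weekly triage with the engineering lead",
)
print(window.closes_on, window.is_open(date(2026, 3, 10)))
```

The validation is the point of the sketch: a listening window with a blank owner fails loudly instead of quietly becoming another unread channel.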

Wrapping Up

A lot of the AI conversation this year is about output gains. Those gains are real. They're also only half of what determines whether the work was worth doing.

The teams that look back on 2026 as a turning point will be the ones who, while everyone else raced to ship more, kept investing in the unglamorous half of the loop. Output is the easy thing to measure. Whether anything you shipped actually landed with real users is the harder, more important question, and it's gotten harder and more important at the same time.

If you're rebuilding your feedback loop for the pace your team is shipping at now, that's the problem Centercode's platform was built for.